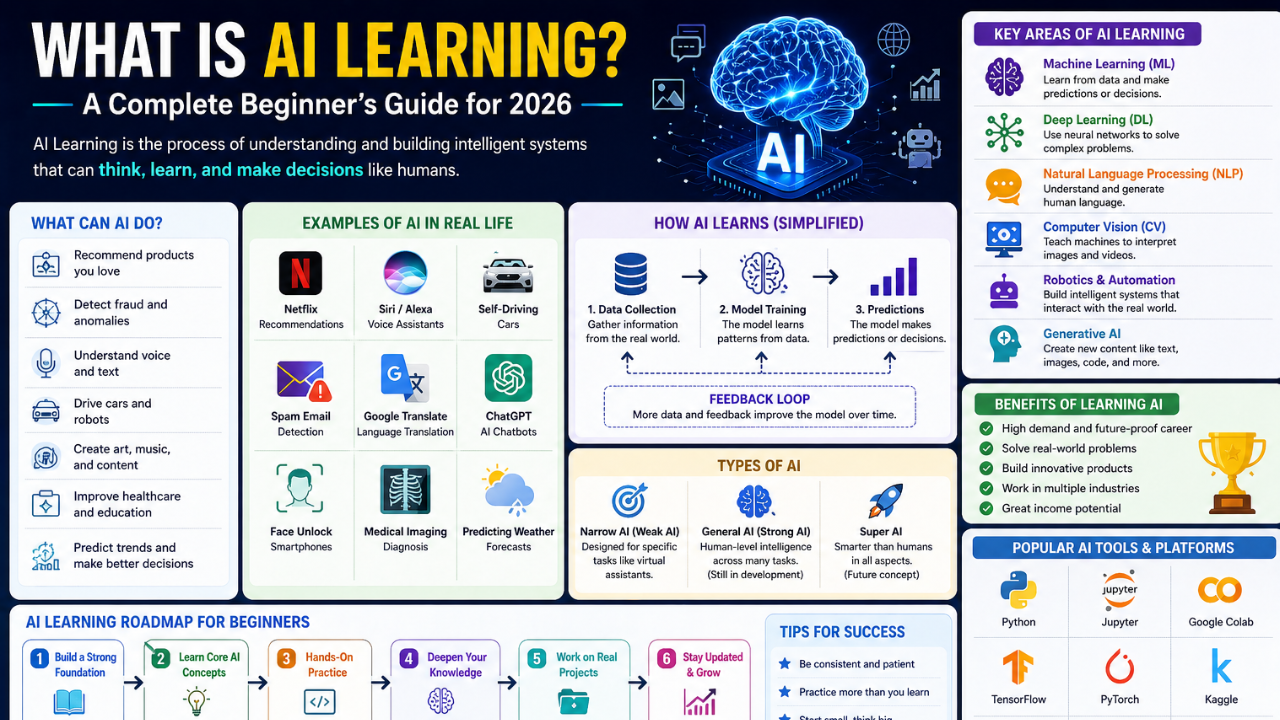

What is AI Learning? A Complete Beginner’s Guide for 2026

Artificial intelligence has moved from research labs into everyday life. Here is a clear, jargon-free introduction to what AI learning means and where to start.

If you have unlocked a phone, followed a film recommendation, or used a search engine in the last year, you have already interacted with artificial intelligence. Yet many learners still ask: what is AI learning, really? This guide answers that question without pretending the field is mystical or impossibly complex.

Defining AI Learning

AI learning, a term often used interchangeably with machine learning, is the process by which a computer system improves its performance at a task by being exposed to data. Instead of writing explicit rules for every possible situation, we give the machine examples and let it find the patterns on its own.

Imagine teaching a child to recognise a cat. You do not hand the child a 200-page rulebook on whiskers, fur length, and ear shape. You point at cats and say “cat.” After enough examples, the child generalises. AI learning works in roughly the same way: examples in, patterns out, predictions afterwards.
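To make "examples in, patterns out, predictions afterwards" concrete, here is a minimal sketch in plain Python. It is only an illustration, not how production systems are built: the two features (weight and ear length), the numbers, and the function names `train` and `predict` are all made up for this example. The "pattern" it learns is simply the average example for each label.

```python
# Toy demo of "examples in, patterns out, predictions afterwards".
# The animals, features, and numbers are hypothetical, chosen for illustration.

def train(examples):
    """Average the feature vectors of each label (this is the learned 'pattern')."""
    sums, counts = {}, {}
    for features, label in examples:
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in total]
            for label, total in sums.items()}

def predict(centroids, features):
    """Return the label whose average example is closest to the input."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))

# Each example: ([weight in kg, ear length in cm], label)
examples = [
    ([4.0, 6.0], "cat"),
    ([5.0, 7.0], "cat"),
    ([3.5, 6.5], "cat"),
    ([20.0, 12.0], "dog"),
    ([30.0, 14.0], "dog"),
    ([25.0, 10.0], "dog"),
]

centroids = train(examples)              # patterns out
print(predict(centroids, [4.5, 6.0]))    # a small animal it has never seen: cat
print(predict(centroids, [27.0, 13.0]))  # a large animal it has never seen: dog
```

Nobody wrote a rule such as "cats weigh less than 10 kg". The boundary between the two labels comes entirely from the examples, so changing the examples changes the predictions.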

The Three Main Types of AI Learning

Most modern AI systems fall into one of three buckets:

- Supervised learning — the model is given inputs paired with the correct outputs. Spam filters, image classifiers, and credit-scoring models are common examples.

- Unsupervised learning — the model is given inputs only and asked to find structure on its own. Customer segmentation and anomaly detection use this approach.

- Reinforcement learning — the model learns through trial and error by interacting with an environment and receiving rewards. Game-playing systems and robotic control rely on it.
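The second and third buckets are harder to picture than the first, so here is a rough sketch of each using only the Python standard library. Everything in it is an assumption made for illustration: the customer spend figures, the payout probabilities, and the helper names `two_means` and `run_bandit`. The unsupervised part is a stripped-down one-dimensional k-means; the reinforcement part is an epsilon-greedy strategy on a three-armed slot machine, one of the simplest trial-and-error setups.

```python
import random

# --- Unsupervised: split unlabeled numbers into two groups (1-D k-means) ---
def two_means(values, rounds=10):
    low, high = min(values), max(values)   # starting guesses for the two centres
    for _ in range(rounds):
        group_low = [v for v in values if abs(v - low) <= abs(v - high)]
        group_high = [v for v in values if abs(v - low) > abs(v - high)]
        low = sum(group_low) / len(group_low)
        high = sum(group_high) / len(group_high)
    return low, high

# Hypothetical monthly spend per customer; no labels are given.
spend = [12, 15, 14, 11, 13, 95, 102, 98, 110, 105]
small, large = two_means(spend)
print(small, large)  # 13.0 102.0

# --- Reinforcement: learn which arm pays best by trial and error ---
def run_bandit(payout_probs, steps=3000, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    estimates = [0.0] * len(payout_probs)  # current guess of each arm's value
    pulls = [0] * len(payout_probs)
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(len(payout_probs))  # explore a random arm
        else:
            # exploit the arm that currently looks best
            arm = max(range(len(estimates)), key=estimates.__getitem__)
        reward = 1.0 if rng.random() < payout_probs[arm] else 0.0
        pulls[arm] += 1
        # running average of the rewards seen for this arm
        estimates[arm] += (reward - estimates[arm]) / pulls[arm]
    return estimates

# The learner never sees these probabilities, only the rewards they produce.
estimates = run_bandit([0.2, 0.5, 0.8])
best_arm = max(range(len(estimates)), key=estimates.__getitem__)
print(best_arm)
```

In the first half, the code is never told which customers are "small" or "large" spenders; it finds the two groups itself. In the second half, the only feedback is a reward of 0 or 1 after each pull, which is the defining feature of reinforcement learning.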

Why Now? Why So Suddenly?

The mathematics behind neural networks dates back to the 1940s and 1950s. The recent explosion in capability is the result of three factors converging: enormous datasets, cheaper specialised hardware (GPUs and TPUs), and refined training techniques. None of these is magical on its own, but together they let researchers train models with hundreds of billions of parameters.

What You Actually Need to Learn First

If you are starting from scratch in 2026, do not begin by trying to read research papers. Begin with the foundations:

- Basic Python — variables, loops, functions, and how to install libraries with pip.

- Linear algebra and probability — at the level of a first-year college course. Khan Academy is enough.

- One hands-on project — a small classifier or chatbot built end to end teaches more than ten passive video lectures.
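As a rough sense of the level these foundations call for, the sketch below uses nothing beyond variables, loops, and functions to compute a dot product (a core linear algebra operation) and a simple probability. The weights, inputs, and function names are hypothetical and chosen only for illustration.

```python
# Variables, a loop, and functions: the Python basics listed above.

def dot(a, b):
    """Dot product of two equal-length vectors."""
    total = 0.0
    for x, y in zip(a, b):
        total += x * y
    return total

def probability(outcomes, event):
    """Fraction of equally likely outcomes for which event(outcome) is true."""
    favourable = 0
    for outcome in outcomes:
        if event(outcome):
            favourable += 1
    return favourable / len(outcomes)

weights = [0.5, -1.0, 2.0]     # hypothetical model weights
features = [4.0, 1.0, 3.0]     # hypothetical input
print(dot(weights, features))  # 0.5*4 - 1*1 + 2*3 = 7.0

die = [1, 2, 3, 4, 5, 6]
print(probability(die, lambda face: face % 2 == 0))  # 3 of 6 faces are even: 0.5
```

If you can read and modify a snippet like this comfortably, you have enough Python to start a first project.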

Many learners spend months collecting bookmarks before writing a single line of code. Resist that urge. Build something small, badly, this week.

Common Misconceptions

“AI is going to think on its own and replace everyone tomorrow.”

Modern AI systems, including the large language models you may have used, are sophisticated pattern matchers. They do not understand in the human sense, and they regularly make confident mistakes. Treat them as powerful tools that need supervision, not infallible oracles.

Where Should You Go Next?

If this beginner overview made sense, your next step is to pick a single specialisation — natural language processing, computer vision, or tabular data — and stay with it for at least three months. Depth beats breadth in the early stages.

We have detailed guides on each track in our articles section. Pick one and start tomorrow morning. The field rewards consistency more than talent.
