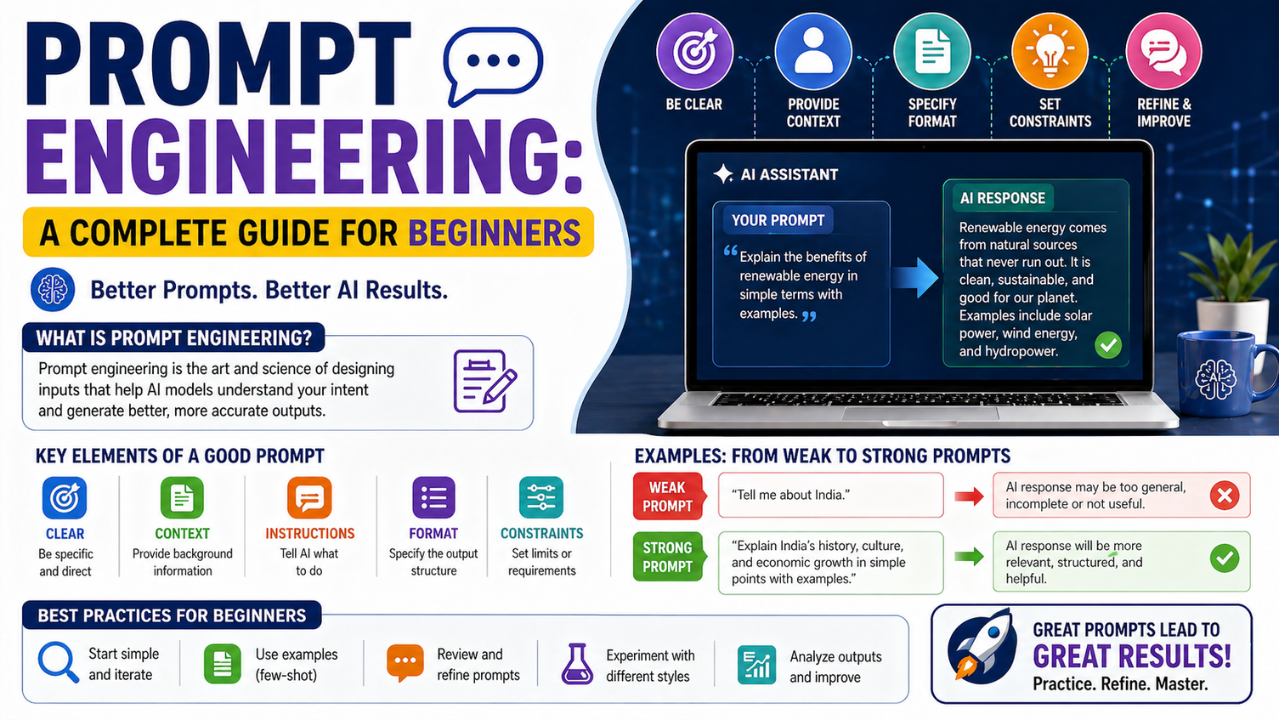

Prompt Engineering: A Complete Guide for Beginners

Prompt engineering is no longer a meme; it is a real, practical skill with patterns that genuinely work. Here is the foundation.

Two years ago, “prompt engineer” was a job title that invited eye-rolls. Today, the ability to consistently get useful output from a large language model is a real skill that separates effective practitioners from frustrated tinkerers. This is not magic. It is a small set of patterns applied with discipline.

The First Rule: Be Specific

Vague prompts produce vague answers. The single highest-impact change most beginners can make is to add specificity: who is the audience, what format is expected, what length, what tone, what constraints?

“Write about machine learning.”

… is far less useful than:

“Write a 250-word introduction to machine learning for a high-school student who is comfortable with algebra. Use one concrete example. Avoid jargon. End with one question that invites further reading.”

Use Structure

LLMs respond well to structured prompts. A simple but effective template:

- Role. “You are a careful, sceptical research assistant.”

- Task. The specific job to be done.

- Context. Any background information the model would not know.

- Format. Exact output structure expected.

- Constraints. What to avoid, length limits, style notes.
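Here is a minimal Python sketch of this template, assuming you assemble prompts in code. `PromptSpec` and `build_prompt` are hypothetical names chosen for illustration and are not part of any library. The section labels and their order follow the list above, and any section left empty is skipped.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the five template sections."""
    role: str
    task: str
    context: str = ""
    output_format: str = ""
    constraints: list[str] = field(default_factory=list)


def build_prompt(spec: PromptSpec) -> str:
    """Render the sections in a fixed order, skipping any that are empty."""
    sections = [
        ("Role", spec.role),
        ("Task", spec.task),
        ("Context", spec.context),
        ("Format", spec.output_format),
        ("Constraints", "\n".join(f"- {c}" for c in spec.constraints)),
    ]
    return "\n\n".join(f"{name}:\n{body}" for name, body in sections if body)


spec = PromptSpec(
    role="You are a careful, sceptical research assistant.",
    task="Write a 250-word introduction to machine learning.",
    context="The reader is a high-school student comfortable with algebra.",
    output_format="Plain prose with one concrete example, ending with one question.",
    constraints=["Avoid jargon.", "Stay under 250 words."],
)
print(build_prompt(spec))
```

Keeping the sections as separate fields makes it easy to vary one part (say, the constraints) while holding the rest fixed.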

Few-Shot Prompting

One of the most reliable techniques is to show the model a few examples of the input-output pattern you want before giving it the real input. Two or three examples are usually enough. The model picks up on the structure, tone, and depth without needing to be told explicitly.
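One common way to lay out few-shot examples is as alternating user/assistant turns placed before the real input. The sketch below assumes the role/content message dictionaries that many chat APIs use, so check your provider's documentation for its exact format. The `few_shot_messages` helper and the ticket-classification examples are hypothetical.

```python
def few_shot_messages(instruction, examples, query):
    """Build a chat-style message list: instruction, example pairs, then the real input.

    The role/content dict layout is an assumption modelled on common chat APIs.
    """
    messages = [{"role": "system", "content": instruction}]
    for example_input, example_output in examples:
        # Each example becomes one user turn and one assistant turn.
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    messages.append({"role": "user", "content": query})
    return messages


examples = [
    ("The checkout page times out.", "Category: bug | Severity: high"),
    ("Please add a dark mode.", "Category: feature request | Severity: low"),
]
messages = few_shot_messages(
    "Classify each support ticket by category and severity.",
    examples,
    "Password reset emails never arrive.",
)
for m in messages:
    print(f"{m['role']}: {m['content']}")
```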

Chain-of-Thought, Used Properly

For tasks requiring reasoning, asking the model to “think step by step” often improves accuracy on multi-step problems. The trick is to use it where it actually helps (math, logic, multi-hop questions) and not as a default for every prompt.
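The sketch below applies the step-by-step cue selectively. It assumes your application already knows what kind of task each prompt is. The task labels, the `Answer:` convention, and both helper functions are hypothetical choices for illustration. Asking for the final answer on a marked line makes it easy to discard the reasoning when you only need the result.

```python
import re
from typing import Optional

# Hypothetical task labels for which step-by-step reasoning tends to help.
REASONING_TASKS = {"math", "logic", "multi_hop"}


def add_reasoning_instruction(prompt: str, task_type: str) -> str:
    """Append a step-by-step cue only for reasoning-heavy task types."""
    if task_type not in REASONING_TASKS:
        return prompt
    return prompt + (
        "\n\nThink step by step. Then give the final answer on its own line, "
        "starting with 'Answer:'."
    )


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' line, or None if there is none."""
    matches = re.findall(r"^Answer:[ \t]*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


print(add_reasoning_instruction("Summarise this email.", "summary"))  # unchanged
reply = "17 apples minus 5 eaten leaves 12.\n12 split among 4 people is 3 each.\nAnswer: 3"
print(extract_final_answer(reply))  # -> 3
```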

Structured Output

If you intend to use the output programmatically, ask for it in a structured format such as JSON and, where your provider's API offers them, use its JSON-mode or function-calling features. Free-form text that you then have to parse is fragile.
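Even with a JSON mode, it is worth validating the reply on your side before using it. This sketch uses only Python's standard `json` module. The field names and types in `REQUIRED_FIELDS` are a hypothetical schema standing in for whatever you asked the model to return.

```python
import json

# Hypothetical schema: the keys and types the prompt asked the model to return.
REQUIRED_FIELDS = {"title": str, "tags": list, "priority": int}


def parse_structured_output(raw: str) -> dict:
    """Parse a JSON reply and check it has the expected keys and types.

    Raises ValueError so the caller can retry or fall back instead of
    passing malformed data further down the pipeline.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    for key, expected_type in REQUIRED_FIELDS.items():
        if key not in data:
            raise ValueError(f"missing field: {key}")
        # Note: bool is a subclass of int, so true/false would pass the int check.
        if not isinstance(data[key], expected_type):
            raise ValueError(f"field {key!r} should be {expected_type.__name__}")
    return data


good = '{"title": "Fix login", "tags": ["auth"], "priority": 2}'
print(parse_structured_output(good)["priority"])  # -> 2
try:
    parse_structured_output("Sure! Here is the JSON you asked for: {...}")
except ValueError as err:
    print("rejected:", err)
```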

Common Anti-Patterns

- Politeness for its own sake. “Please could you possibly…” adds tokens without adding clarity.

- Threats and bribes. “I will tip you $200” was briefly trendy. Modern models do not respond meaningfully to it.

- Overstuffed prompts. Five thousand words of context for a 50-word question rarely improves output.

- Single-prompt thinking. Many problems are better split into a sequence of small, focused prompts.
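Here is a minimal sketch of splitting one request into a sequence of focused prompts. `call_model` stands in for whatever function sends a prompt to your model and returns its text; it is an assumption here. The three-step extract, check, and summarise pipeline is a hypothetical example. The offline `fake_model` exists only so the chain can run without an API.

```python
from typing import Callable

# Hypothetical signature for a model call: prompt text in, reply text out.
ModelFn = Callable[[str], str]


def run_chain(document: str, call_model: ModelFn) -> str:
    """Run three small, focused prompts in sequence, feeding each output forward."""
    facts = call_model(f"List the key factual claims in this text, one per line:\n\n{document}")
    checked = call_model(f"For each claim below, mark it SUPPORTED or UNCLEAR:\n\n{facts}")
    return call_model(f"Write a three-sentence summary using only SUPPORTED claims:\n\n{checked}")


calls: list[str] = []


def fake_model(prompt: str) -> str:
    """Offline stand-in: records each prompt and echoes its first line."""
    calls.append(prompt)
    return f"[reply to: {prompt.splitlines()[0]}]"


print(run_chain("Some article text.", fake_model))
```

Because each step is a separate call, you can log or evaluate every intermediate output on its own. That is much harder with one monolithic prompt.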

Evaluation Beats Intuition

Once you are using prompts in any serious application, stop guessing whether they are good. Build a small evaluation set — twenty to fifty examples with known good answers — and run new prompt variations against it. The discipline of measurement is what turns prompt tinkering into prompt engineering.
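A small evaluation harness might look like the following. It scores each prompt variant by exact match against a fixed set of expected answers. Exact match is an assumption that suits short factual answers; many tasks need a looser check or a rubric. The three-item `EVAL_SET` and the deterministic `fake_model` are toy placeholders so the example runs offline. In practice the set would hold the twenty to fifty real examples described above, and `call_model` would call your model.

```python
from typing import Callable

# Hypothetical toy eval set of (input, expected answer) pairs.
EVAL_SET = [("2 + 2", "4"), ("10 / 4", "2.5"), ("3 * 7", "21")]


def score_prompt(template: str, call_model: Callable[[str], str], eval_set) -> float:
    """Fraction of examples where the stripped reply exactly equals the expected answer."""
    correct = 0
    for question, expected in eval_set:
        reply = call_model(template.format(question=question))
        if reply.strip() == expected:
            correct += 1
    return correct / len(eval_set)


def compare_variants(variants: dict[str, str], call_model, eval_set) -> dict[str, float]:
    """Score every prompt variant against the same fixed eval set."""
    return {name: score_prompt(t, call_model, eval_set) for name, t in variants.items()}


ANSWERS = dict(EVAL_SET)


def fake_model(prompt: str) -> str:
    """Offline stand-in: replies tersely only when the prompt asks for the number only."""
    question = prompt.split("Q: ", 1)[1].splitlines()[0]
    answer = ANSWERS[question]
    return answer if "number only" in prompt else f"The answer is {answer}."


variants = {
    "bare": "Q: {question}",
    "terse": "Answer with the number only.\nQ: {question}",
}
print(compare_variants(variants, fake_model, EVAL_SET))  # -> {'bare': 0.0, 'terse': 1.0}
```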

Where the Field Is Going

Static prompts are slowly being replaced by structured agent frameworks where prompts are generated, tested, and refined automatically. Hand-crafted prompts will not disappear, but the highest-leverage skill in 2026 is increasingly the ability to design and evaluate systems of prompts rather than perfect a single one.
