Computer Vision: Real-World Applications That Actually Work

Computer vision has quietly become one of the most deployed branches of AI. A grounded tour of where it works, where it does not, and what to learn.

Computer vision tends to live in the shadow of generative AI in public conversation. In production, it is a different story: vision systems run quietly inside hospitals, factories, banks, transport networks, and your phone’s camera roll. This article surveys the applications that have crossed from “interesting demo” to “real workload.”

The Core Tasks

Most of what you will see in the wild is built on a small set of foundational tasks:

- Image classification — what is in this image?

- Object detection — what is in this image and where is it?

- Segmentation — which exact pixels belong to which object?

- Optical character recognition — reading text from images.

- Pose estimation — where are the joints of a person or the keypoints of an object?

- Vision-language tasks — answering questions about an image, generating captions, or guiding an action.
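To make the "where is it?" part of object detection concrete, here is a minimal sketch of intersection over union (IoU), a common way to score how well a predicted box overlaps the true one. The `Box` type and the example coordinates are illustrative assumptions, not taken from any particular library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in pixel coordinates (hypothetical helper type)."""
    x1: float
    y1: float
    x2: float
    y2: float

    def area(self) -> float:
        # An "inside-out" box (x2 < x1 or y2 < y1) has zero area.
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes, a value between 0 and 1."""
    overlap = Box(max(a.x1, b.x1), max(a.y1, b.y1),
                  min(a.x2, b.x2), min(a.y2, b.y2))
    overlap_area = overlap.area()
    union_area = a.area() + b.area() - overlap_area
    return overlap_area / union_area if union_area > 0 else 0.0


# Made-up example: a prediction that is shifted away from the true box.
predicted = Box(10, 10, 50, 50)
ground_truth = Box(30, 30, 70, 70)
print(f"IoU = {iou(predicted, ground_truth):.3f}")
```

A classifier is only right or wrong about the label. A detector can name the right object and still score poorly because the box is in the wrong place, which is why detection is evaluated with an overlap measure like this one.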

Where Vision Genuinely Works in Production

- Medical imaging triage. Tuberculosis screening, diabetic retinopathy detection, and mammography assistance are now deployed at hospital scale.

- Manufacturing quality control. Detecting defects on production lines is one of the most reliable applications and a major share of industrial AI revenue.

- Document understanding. Banks, insurers, and government agencies increasingly extract structured data from scanned forms and invoices automatically.

- Agriculture monitoring. Satellite and drone imagery is used to estimate crop health, yield, and pest pressure across enormous areas.

- Retail and inventory. Retailers use vision for automated shelf monitoring, planogram compliance checks, and self-checkout systems.

- Smart-camera applications. These range from wildlife monitoring to traffic analytics to library book scanning.

Where It Still Struggles

Despite the progress, vision systems remain brittle in some recurring ways:

- Out-of-distribution inputs. A model trained on one factory’s lighting may fail at a sister factory across the road.

- Long-tail recognition. Common objects are easy; rare ones are not. Real-world data is dominated by long tails.

- Adversarial fragility. Small, deliberate perturbations, often too faint for a person to notice, can flip a model’s prediction in ways that should embarrass us.

- Three-dimensional reasoning. Models are improving but still uneven on tasks that require real understanding of geometry.
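Adversarial fragility is easier to believe once you have seen it on a toy model. The sketch below uses a four-pixel "image" and a hand-picked linear classifier (both are assumptions made purely for illustration) and nudges every pixel by a small amount in the direction that lowers the score, in the spirit of the fast gradient sign method. Real attacks target deep networks, but the mechanism is the same: many tiny changes that all push the same way.

```python
def score(weights, bias, pixels):
    """Linear classifier: a positive score means 'object present'."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def sign(value):
    """Return -1, 0 or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights, pixels, epsilon):
    """Shift each pixel by at most epsilon so that the score goes down.

    For a linear model the gradient of the score with respect to the
    input is simply the weight vector, so we step against its sign.
    """
    return [p - epsilon * sign(w) for w, p in zip(weights, pixels)]


# Hypothetical weights and input, chosen so the arithmetic is easy to follow.
WEIGHTS = [0.5, -1.0, 0.25, 2.0]
BIAS = -0.1
image = [0.2, 0.1, 0.4, 0.1]

adversarial = perturb(WEIGHTS, image, epsilon=0.1)

print("original score:   ", round(score(WEIGHTS, BIAS, image), 3))
print("adversarial score:", round(score(WEIGHTS, BIAS, adversarial), 3))
```

No pixel moves by more than 0.1, yet the score changes sign and the predicted class flips.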

What to Learn If You Want to Work in Vision

- Solid Python and PyTorch basics.

- The classical computer-vision pipeline — colour spaces, filtering, edges, contours — through a library like OpenCV.

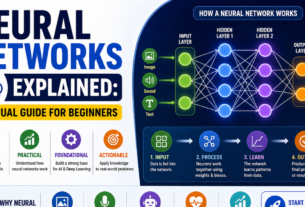

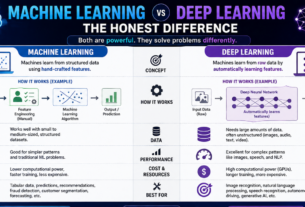

- Convolutional neural networks at a conceptual level, then transformer-based vision models.

- One end-to-end project: dataset collection, training, evaluation, deployment.

- An honest understanding of dataset biases — vision is the field where biased training data has caused some of the most public AI failures.
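If the classical pipeline in the list above feels abstract, it can help to write one pass of it by hand before reaching for a library. The sketch below is plain Python rather than OpenCV, and the tiny test image is made up. It converts an RGB image to greyscale with the standard BT.601 luma weights, then applies the Sobel x kernel to find a vertical edge.

```python
def to_gray(rgb_image):
    """Convert rows of (r, g, b) tuples to greyscale using BT.601 luma weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for r, g, b in row]
            for row in rgb_image]


# Sobel kernel that responds to left-to-right changes in brightness.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]


def horizontal_gradient(gray):
    """Apply SOBEL_X to interior pixels; border pixels are left at 0."""
    height, width = len(gray), len(gray[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            out[y][x] = sum(
                SOBEL_X[dy + 1][dx + 1] * gray[y + dy][x + dx]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            )
    return out


# Made-up 5x6 image: left half black, right half white.
BLACK, WHITE = (0, 0, 0), (255, 255, 255)
image = [[BLACK] * 3 + [WHITE] * 3 for _ in range(5)]

edges = horizontal_gradient(to_gray(image))
edge_columns = sorted({x for row in edges
                       for x, value in enumerate(row) if abs(value) > 1e-6})
print(edge_columns)  # [2, 3]: the columns on either side of the boundary
```

A library such as OpenCV does the same job far faster and handles borders properly, but writing the loop once makes it clearer what "filtering" and "edges" actually compute.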

A Useful Starting Project

Build a small image classifier that recognises the bird species in your local park. It is unglamorous, but it forces you to handle every messy step of a real vision project: collecting data, dealing with class imbalance, evaluating performance honestly, and deploying somewhere others can use it. Many strong careers in this field began with a project of exactly this scope.
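"Evaluating performance honestly" matters most when the classes are imbalanced, which bird photos from a single park almost certainly will be. As a minimal sketch, with made-up species counts and a deliberately lazy model, compare plain accuracy with per-class recall and balanced accuracy (the mean of the per-class recalls):

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of all examples that were labelled correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_recall(y_true, y_pred):
    """For each true class, the fraction of its examples the model got right."""
    totals = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: hits[label] / totals[label] for label in totals}


def balanced_accuracy(y_true, y_pred):
    """Mean of the per-class recalls, so every class counts equally."""
    recalls = per_class_recall(y_true, y_pred)
    return sum(recalls.values()) / len(recalls)


# Hypothetical validation set: mostly pigeons, a few rarer species.
y_true = ["pigeon"] * 90 + ["robin"] * 8 + ["kingfisher"] * 2
# A lazy model that answers "pigeon" for every photo.
y_pred = ["pigeon"] * 100

print("accuracy:         ", accuracy(y_true, y_pred))
print("per-class recall: ", per_class_recall(y_true, y_pred))
print("balanced accuracy:", round(balanced_accuracy(y_true, y_pred), 3))
```

The lazy model scores 90% accuracy while never recognising a single robin or kingfisher. Reporting per-class numbers alongside the headline figure is one simple way to keep yourself honest.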
