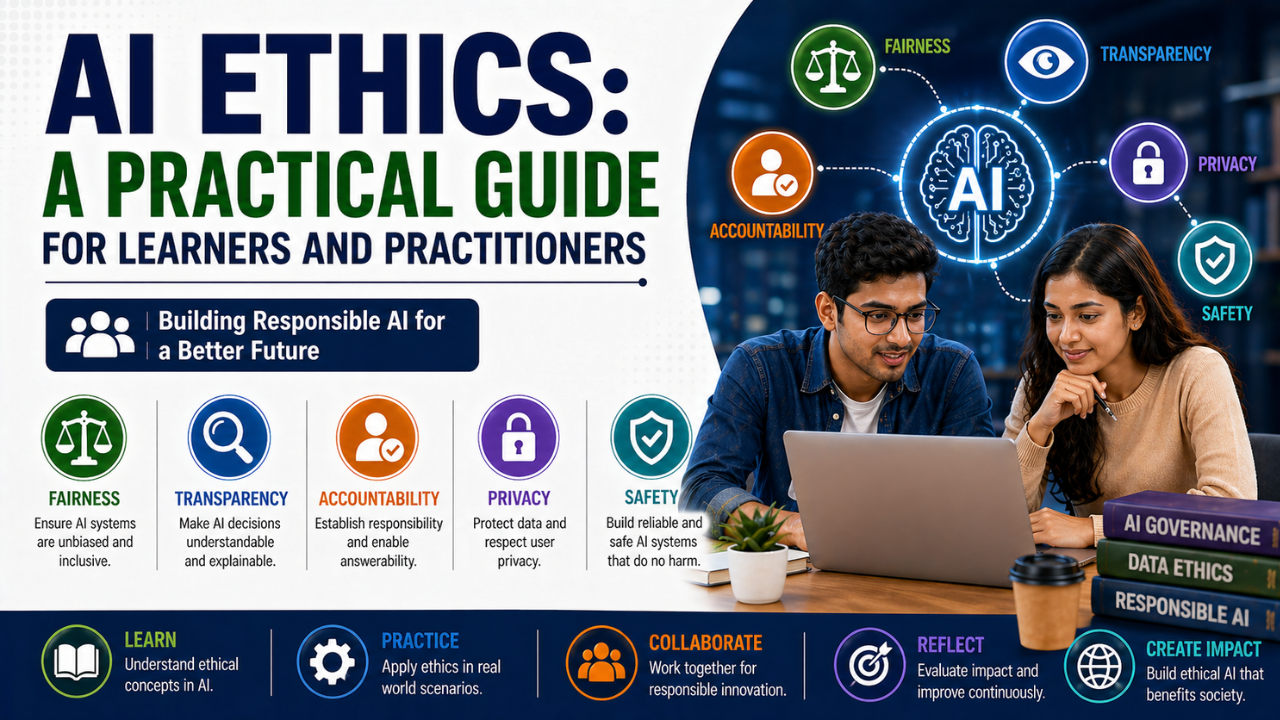

A Practical Guide for Learners and Practitioners: Ethics in AI is not an optional course you take after the technical material. It is part of building things that work for real people. A grounded primer.

Ethics gets a strange treatment in many AI curriculums. It is either skipped entirely or relegated to a single uncomfortable lecture filled with hypothetical trolley problems. Both extremes miss the point. In practice, AI ethics is a continuous engineering and product discipline, and the practitioners who take it seriously build better systems.

Why It Is Not Optional

The systems you build will affect people who do not know those systems exist, did not choose them, and cannot easily contest them. A loan model decides who gets credit. A vision model decides who passes airport screening smoothly. A language model shapes what millions of people read each day. The leverage is real, even when the engineer behind it feels like they are just shipping code.

The Recurring Patterns of Harm

- Biased data leading to biased outcomes. A historical dataset that under-represents certain groups will produce a model that under-serves them.

- Inappropriate context transfer. A model trained on one population and then deployed on another that it does not represent.

- Opaque decisions. Affected people who cannot understand or contest what happened to them.

- Feedback loops. A predictive system that, once deployed, changes the data it is later trained on.

- Privacy erosion. Models that memorise and emit training data including personal details.

- Concentration of capability. Tools that disproportionately empower a few large actors.

Practical Habits Worth Forming Early

- Look at your data. Do not stop at summary statistics; scroll through samples. Many ethical problems become obvious after twenty minutes of honest inspection.

- Disaggregate your evaluations. Overall accuracy hides subgroup failures. Always check performance separately for each subgroup that matters in your application, not just in aggregate.

- Document decisions. Model cards and dataset datasheets exist for a reason. Even a one-page version dramatically reduces future ambiguity.

- Make refusal first-class. Build in the option for the system to decline to answer when it is unsure. Misplaced confidence is often more dangerous than admitted ignorance.

- Talk to the people affected. Domain experts and impacted users will catch issues no engineer will.
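
Two of these habits, disaggregating evaluations and making refusal first-class, fit in a few lines of code. What follows is a minimal sketch in plain Python, not a reference implementation. The function names `accuracy_by_group` and `predict_or_abstain`, the `min_confidence` value of 0.8, and the toy labels and groups are all assumptions made up for illustration.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy} so subgroup failures are not averaged away."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


def predict_or_abstain(probabilities, min_confidence=0.8):
    """Return the most likely label, or None when confidence is too low.

    `probabilities` maps label -> probability. `min_confidence` is an
    illustrative threshold (an assumption), not a recommended value.
    """
    label, confidence = max(probabilities.items(), key=lambda item: item[1])
    return label if confidence >= min_confidence else None


# Toy data, invented for illustration only.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "a", "b", "b", "b"]

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
per_group = accuracy_by_group(y_true, y_pred, groups)

print("overall accuracy:", overall)
print("accuracy by group:", per_group)

# A None result means "decline to answer"; the caller decides what happens
# next, for example routing the case to a human reviewer.
print(predict_or_abstain({"approve": 0.55, "deny": 0.45}))
```

On this toy data the overall accuracy is 0.75, which looks acceptable until the breakdown shows group "a" at 1.0 and group "b" at roughly 0.33. Returning `None` rather than a low-confidence label forces every caller to handle the "unsure" case explicitly.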

The Frameworks You Should Know

You do not need to memorise every framework, but a working familiarity helps. The OECD AI Principles, NIST’s AI Risk Management Framework, and India’s draft Digital India Bill provisions all converge on a similar core: accountability, transparency, fairness, robustness, and respect for privacy.

What This Looks Like Day to Day

Ethical practice is rarely dramatic. It usually looks like:

- Adding a fairness check to your evaluation script.

- Writing a paragraph in a README explaining what your model should not be used for.

- Pushing back, politely, when a stakeholder asks for a deployment scope that exceeds what your data supports.

- Pausing a release because a subgroup result is troubling.

- Documenting why you chose one threshold over another.
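
As a rough illustration of the first and last items, a fairness check in an evaluation script can be as small as the sketch below. It uses the gap in selection rates between groups (often called the demographic parity difference), which is only one of several possible fairness metrics and may not suit your problem. The names `selection_rates`, `fairness_check`, and `MAX_SELECTION_RATE_GAP`, the value 0.2, and the toy predictions are all hypothetical.

```python
from collections import defaultdict

# Illustrative value only (an assumption). In a real project, record next to
# this constant why the number was chosen and who agreed to it.
MAX_SELECTION_RATE_GAP = 0.2


def selection_rates(y_pred, groups):
    """Return {group: fraction of positive predictions}."""
    positives = defaultdict(int)
    total = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        total[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / total[group] for group in total}


def fairness_check(y_pred, groups, max_gap=MAX_SELECTION_RATE_GAP):
    """Compare the largest and smallest selection rate against max_gap."""
    rates = selection_rates(y_pred, groups)
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "gap": gap, "passed": gap <= max_gap}


# Toy predictions, invented for illustration only.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

report = fairness_check(y_pred, groups)
print(report)
```

Here group "a" is selected 75% of the time and group "b" 25% of the time, so the gap of 0.5 exceeds the threshold and `passed` is `False`. In a real evaluation script, a failed check might block the release or exit with a non-zero status so the result cannot be silently ignored.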

A Note for Students

You will not be the most senior person in any meeting for several years. That does not exempt you from this work. Many of the most important interventions in real AI projects come from the most junior person in the room, asking the question nobody else wanted to ask. Get into that habit while it is still cheap to practise it.
