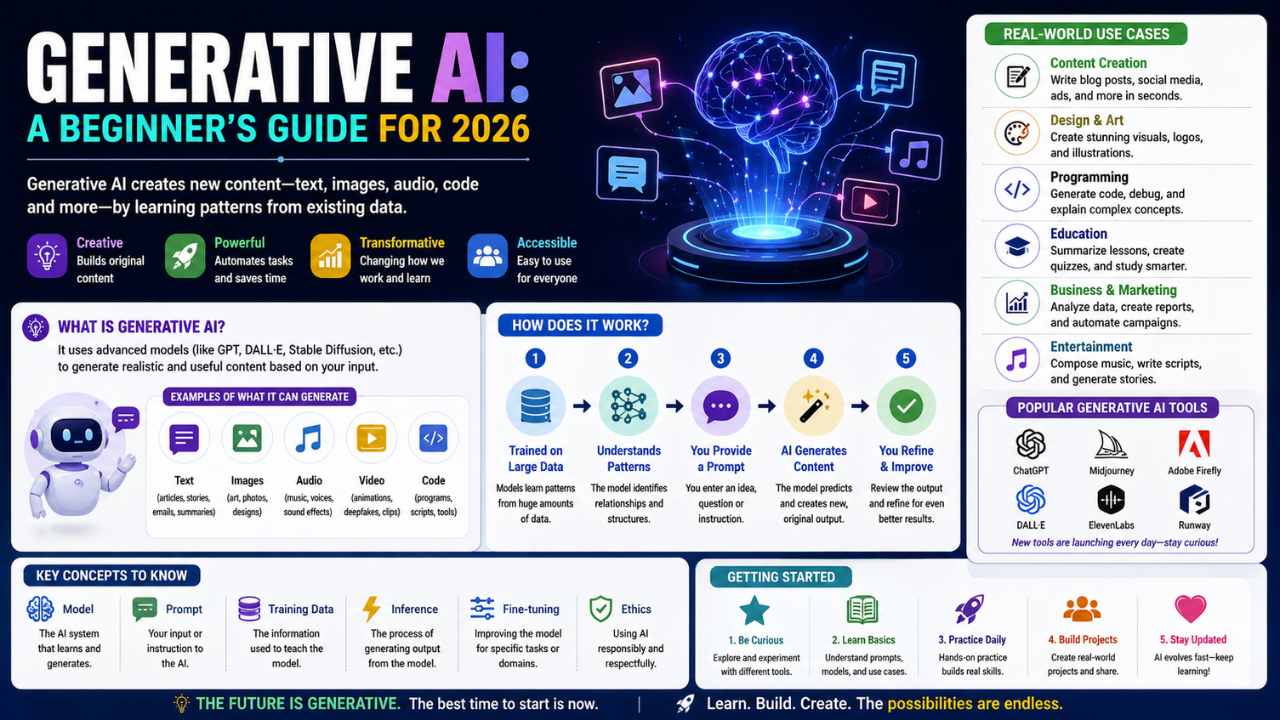

Generative AI: A Beginner’s Guide for 2026

Generative AI is the most visible branch of AI today. Here is what it is, how it works at a high level, and where the limits actually lie.

Generative AI is the part of artificial intelligence that produces new content — text, images, audio, code, video. It is also the part of AI most people have actually used, often without realising it. This guide gives you the conceptual map you need to make sense of the noise.

What Generative AI Actually Does

A generative model learns the statistical patterns in a body of training data and uses those patterns to produce new examples that resemble the originals. A language model learns how words tend to follow each other; an image model learns how pixels tend to arrange into faces, landscapes, and objects.
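To make "learning how words tend to follow each other" concrete, here is a deliberately tiny sketch in Python. It is a bigram model: it counts which word follows which in a toy corpus, then generates new text by sampling each next word in proportion to those counts. Real LLMs use neural networks over subword tokens and far longer contexts. The corpus, the function names, and the bigram simplification are all assumptions made for illustration.

```python
import random
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        # <s> and </s> mark the start and end of a sentence.
        words = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def next_word_probs(model: dict[str, Counter], word: str) -> dict[str, float]:
    """Turn raw follow-counts into a probability distribution."""
    counts = model[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def generate(model: dict[str, Counter], rng: random.Random, max_words: int = 20) -> str:
    """Produce a new sentence one word at a time, weighted by learned counts."""
    word, out = "<s>", []
    for _ in range(max_words):
        probs = next_word_probs(model, word)
        word = rng.choices(list(probs), weights=list(probs.values()))[0]
        if word == "</s>":
            break
        out.append(word)
    return " ".join(out)


corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "the cat chased the dog",
]
model = train_bigram(corpus)
print(next_word_probs(model, "the"))
print(generate(model, random.Random(0)))
```

Every generated sentence is stitched together from word pairs seen in training. That is why it can produce "the dog sat on the mat", a sentence that never appears in the corpus: each adjacent pair is familiar, even though nothing about cats, dogs, or mats is understood.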

The crucial point: these models do not understand content the way humans do. They are remarkably good at predicting plausible continuations. Sometimes that looks like reasoning. Sometimes it looks like a confident hallucination.

The Major Families

- Large Language Models (LLMs) — generate text. Examples include the GPT family, Claude, Gemini, and the openly released (open-weight) Llama and Mistral models.

- Diffusion models — generate images and increasingly video. They start from random noise and gradually refine it into a coherent picture.

- Audio generation models — speech synthesis, music generation, voice cloning. Often based on transformer or diffusion variants.

- Multimodal models — accept and produce mixtures of text, image, audio, and video. The frontier of the field in 2026.
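The diffusion idea of starting from random noise and gradually refining it can be sketched in a few lines. The Python below follows a DDPM-style sampling loop on a tiny four-number "image", using the linear noise schedule commonly paired with DDPM (betas from 1e-4 to 0.02 over 1000 steps). It swaps the trained neural network for a hypothetical `oracle_noise_predictor` that already knows the target. That shortcut is purely an illustrative assumption: a real model has to learn to predict the noise from data, and that is where all the difficulty lives.

```python
import math
import random

T = 1000  # number of denoising steps
# Linear noise schedule: how much noise the forward process adds at each step.
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]
alphas = [1.0 - b for b in betas]
# Cumulative product: how much of the original signal survives by step t.
alpha_bars = []
prod = 1.0
for a in alphas:
    prod *= a
    alpha_bars.append(prod)

target = [0.9, -0.5, 0.1, 0.7]  # hypothetical "clean image"


def oracle_noise_predictor(x_t: list[float], t: int) -> list[float]:
    """Hypothetical stand-in for a trained network: returns the exact noise
    in x_t. It cheats by knowing `target`; a real model must learn this."""
    ab = alpha_bars[t]
    return [(x - math.sqrt(ab) * x0) / math.sqrt(1.0 - ab) for x, x0 in zip(x_t, target)]


def sample(rng: random.Random) -> list[float]:
    x = [rng.gauss(0.0, 1.0) for _ in target]  # start from pure noise
    for t in reversed(range(T)):
        eps = oracle_noise_predictor(x, t)
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        # Remove a slice of the predicted noise (DDPM mean update) ...
        x = [(xi - coef * ei) / math.sqrt(alphas[t]) for xi, ei in zip(x, eps)]
        # ... then add a little fresh noise, except on the final step.
        if t > 0:
            sigma = math.sqrt(betas[t])
            x = [xi + sigma * rng.gauss(0.0, 1.0) for xi in x]
    return x


print([round(v, 3) for v in sample(random.Random(42))])
```

Because the oracle knows the answer, the loop lands on `target`. The point is the shape of the procedure: many small steps, each one removing some predicted noise and re-injecting a smaller amount of fresh noise.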

How They Are Built (Roughly)

- Pretraining. The model is fed a massive dataset and trained to predict the next token, pixel, or sample. This stage is enormously expensive.

- Fine-tuning. The model is further trained on a smaller, curated dataset to align its behaviour with a specific use case or set of values.

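- Reinforcement learning from human feedback (RLHF). Human raters compare pairs of model outputs. Those preferences are used to train a reward model, and the model is then optimised with reinforcement learning to produce outputs that the reward model scores highly.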
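The training objectives behind these stages can each be written down in a few lines. The sketch below assumes the model has already produced probabilities (for pretraining) or scalar scores (for a reward model); all numbers are made up. Next-token pretraining minimises cross-entropy, which is the negative log-probability the model assigned to the token that actually came next. In common RLHF setups, the reward model is trained on human comparisons with a pairwise (Bradley-Terry) loss that pushes the score of the preferred output above the rejected one.

```python
import math


def next_token_loss(probs: dict[str, float], actual_next: str) -> float:
    """Pretraining objective for one position: cross-entropy, i.e. the
    negative log of the probability the model gave the true next token."""
    return -math.log(probs[actual_next])


def preference_loss(score_chosen: float, score_rejected: float) -> float:
    """Pairwise (Bradley-Terry) loss for a reward model: small when the
    human-preferred output scores higher than the rejected one."""
    margin = score_chosen - score_rejected
    return math.log1p(math.exp(-margin))  # equals -log(sigmoid(margin))


# Hypothetical model output after the context "the cat sat on the".
probs = {"mat": 0.6, "rug": 0.3, "dog": 0.1}
print(next_token_loss(probs, "mat"))  # likely token came next: low loss
print(next_token_loss(probs, "dog"))  # unlikely token came next: high loss

print(preference_loss(2.0, -1.0))  # reward model agrees with the rater: low loss
print(preference_loss(-1.0, 2.0))  # reward model disagrees: high loss
```

For a language model, supervised fine-tuning usually reuses the same next-token loss on the smaller, curated dataset. The reinforcement learning step that follows reward-model training is too involved for a short sketch.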

What They Are Genuinely Good At

- Drafting and rewriting text.

- Summarising long documents.

- Translating between languages.

- Writing routine code and explaining unfamiliar code.

- Producing first-pass design ideas, images, and music.

Where They Still Fail

- Factual accuracy. Confidently inventing information remains a real problem.

- Long-horizon reasoning. Multi-step problems with strict logical chains are unreliable.

- Novel situations. Anything genuinely unlike the training data exposes the limits quickly.

- Math and counting. Performance has improved but is still uneven.

How to Start Building With Generative AI

- Pick one model API (OpenAI, Anthropic, or Google), or one open-source model you can run locally.

- Build something tiny: a personal note summariser, a recipe rewriter, a question-answering bot over your own documents.

- Learn prompt engineering basics — clear instructions, examples, structured output.

- Once comfortable, look into retrieval-augmented generation (RAG) and agent patterns.
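A question-answering bot over your own documents, combined with the prompt-engineering basics above, can be sketched without calling any API. The Python below is a toy retrieval-augmented generation (RAG) pipeline built on several assumptions. It ranks notes by word-overlap cosine similarity, a stand-in for the learned embeddings real RAG systems use. It then pastes the best matches into a prompt that has clear instructions, one example, and a request for JSON output, and finally validates whatever JSON comes back. The notes, function names, and reply string are all hypothetical. Sending the prompt is left to whichever API or local model you picked, so no vendor SDK call is shown.

```python
import json
import math
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words vector (toy stand-in for a learned embedding)."""
    return Counter(text.lower().replace("?", "").replace(".", "").split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the question."""
    q = vectorize(question)
    return sorted(docs, key=lambda d: cosine(q, vectorize(d)), reverse=True)[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Clear instructions + one example + a required output format."""
    sources = "\n".join(f"[{i}] {c}" for i, c in enumerate(context, 1))
    return (
        "Answer the question using ONLY the sources below. "
        "If the sources do not contain the answer, say so.\n"
        'Reply as JSON: {"answer": string, "sources": [numbers]}\n\n'
        'Example reply: {"answer": "Tuesday", "sources": [1]}\n\n'
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


def parse_reply(reply: str) -> dict:
    """Validate the model's structured output instead of trusting it."""
    data = json.loads(reply)
    if not isinstance(data.get("answer"), str) or not isinstance(data.get("sources"), list):
        raise ValueError("reply does not match the requested schema")
    return data


notes = [
    "The team meeting moved to Thursday at 10am.",
    "Grandma's lasagne needs 45 minutes in the oven.",
    "The dentist appointment is on the 14th.",
]
question = "When is the team meeting?"
top = retrieve(question, notes)
print(build_prompt(question, top))

# Hypothetical reply; in practice this string comes back from your chosen model.
print(parse_reply('{"answer": "Thursday at 10am", "sources": [1]}'))
```

Validating the reply matters because the model can return malformed or invented output. Rejecting anything that does not match the requested shape is a cheap first line of defence.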

Ethics and Caution

Generative models can create convincing fake content. Anyone working with them seriously should think early about provenance, attribution, and the rights of the people whose work appears in training data. The technology is likely to outpace regulation for some time. Apply your own judgement.
